The reading group discusses (mostly recent) papers on theoretical machine learning and on algorithms that aim to learn structured, compressed and/or interpretable latent representations of observations in a principled way, often with implications not only for machine learning but also for neuroscience and cognitive science.

The group is open to anyone, but operates under the assumption that participants know the basic tenets of unsupervised learning and probability theory and have read the papers assigned for the meeting.

Events of the group are advertised on a mailing list. If you wish to be on this list or have any inquiries about the series, contact Mihály Bányai.

Upcoming meeting:

Papers to be discussed:

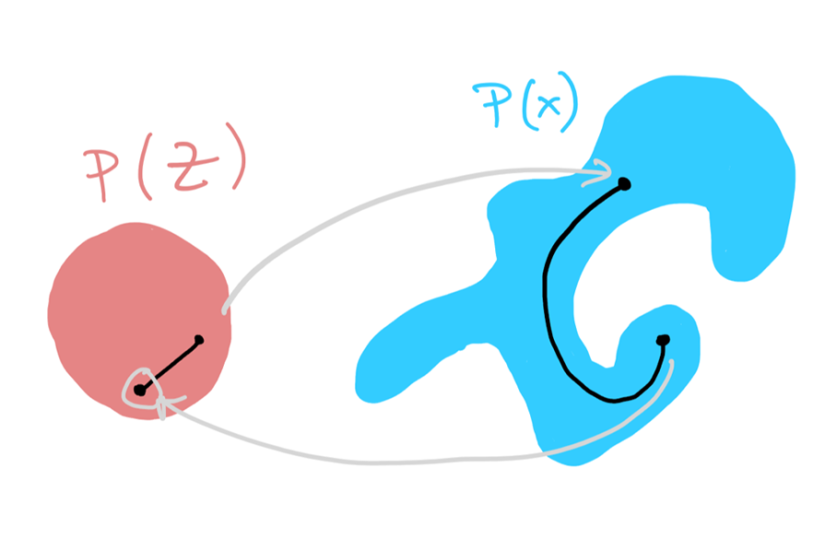

Song, Y., Sohl-Dickstein, J., Kingma, D. P., Kumar, A., Ermon, S., & Poole, B. (2020). Score-based generative modeling through stochastic differential equations. arXiv preprint arXiv:2011.13456.

and

Kadkhodaie, Z., & Simoncelli, E. (2021). Stochastic solutions for linear inverse problems using the prior implicit in a denoiser. Advances in Neural Information Processing Systems, 34, 13242-13254.

Time: 17:00, 17 April 2024.

Location: CEU, 1051 Bp. Nádor u. 15, Room 104.

The Zoom link will be sent to the mailing list a couple of days ahead; if you miss it, ask Mihály Bányai.